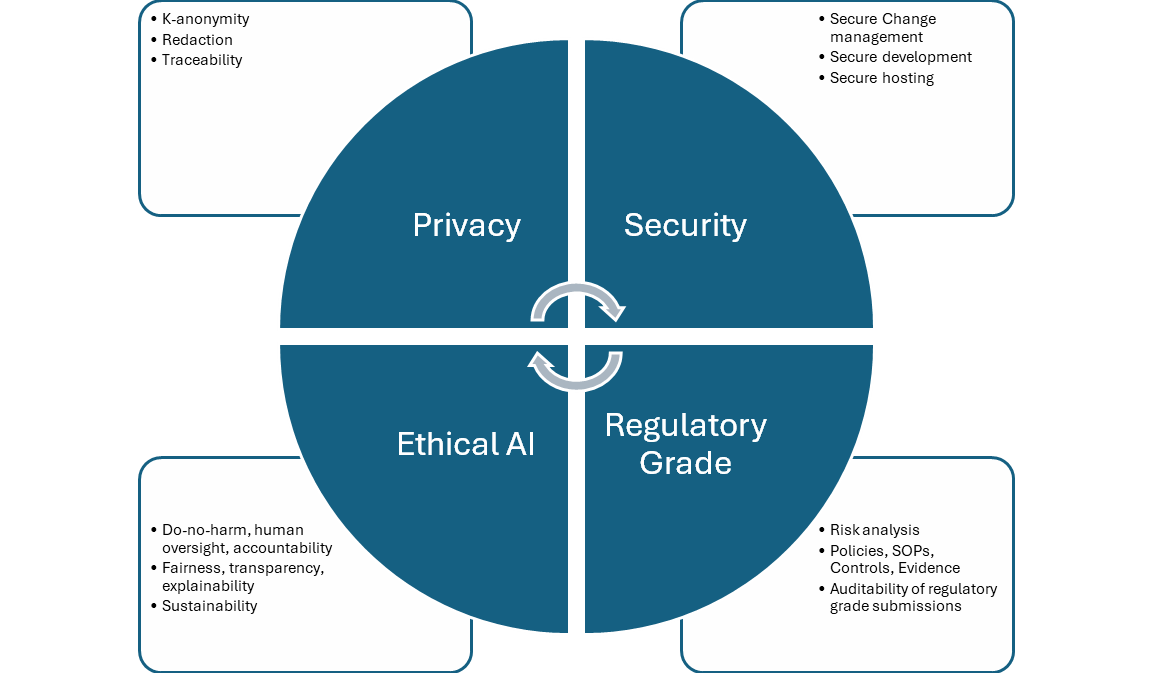

There are four aspects to compliance in healthcare analytics: privacy, security, regulatory-grade quality, and ethical AI. Each is governed by distinct but interrelated regulatory standards and recommendations. They apply broadly to all engineering systems, and in particular to AI, which is our focus here.

Privacy

HIPAA protects patients’ protected health information (PHI). At the same time, it recognizes the value of using de-identified health data for research: when PHI is de-identified in accordance with HIPAA, the risk that any patient could be identified is very small.

The HIPAA Privacy Rule sets the standard for de-identification. Once the standard is satisfied, the resulting data is no longer considered PHI and can be disclosed outside a health system’s network and used for research. The Privacy Rule provides two methods of de-identification: Safe Harbor and Expert Determination. Truveta uses the Expert Determination method, working with experts experienced in making Expert Determinations in accordance with guidance from the Office for Civil Rights (OCR), the division of the US Department of Health and Human Services (HHS) responsible for enforcing HIPAA.

Redaction

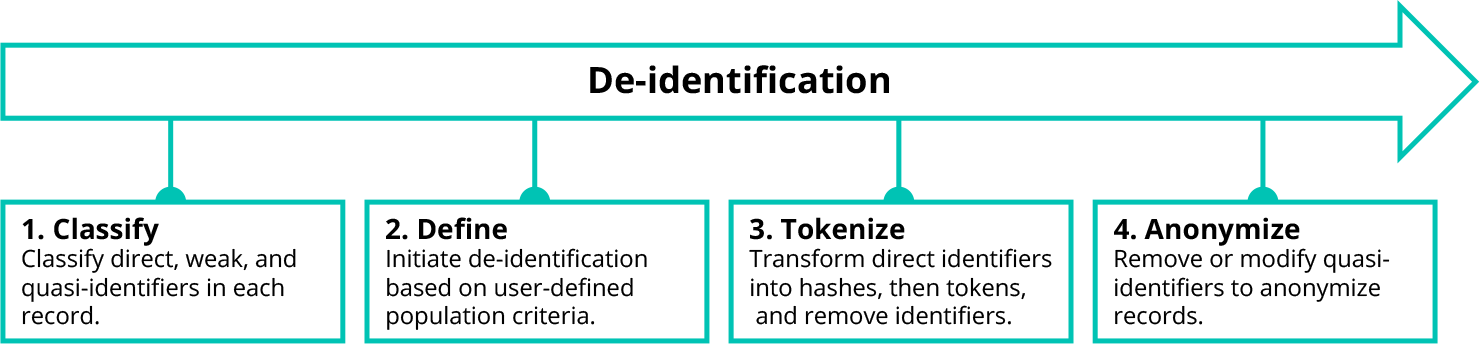

De-identification

Unlike direct identifiers, weak identifiers and quasi-identifiers do not always need to be removed to preserve patient privacy. In many cases they only need to be generalized, meaning specific values are replaced with less precise ones (for example, an exact age replaced with a ten-year age band).
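As a minimal illustration of generalization, the Python sketch below coarsens three hypothetical quasi-identifiers. An exact age becomes a ten-year band, a ZIP code keeps only its first three digits, and a full date is reduced to year and month. The field names, band width, and masking rules are assumptions chosen for illustration. They are not Truveta’s actual de-identification rules.

```python
from datetime import date

def generalize_age(age: int, width: int = 10) -> str:
    """Replace an exact age with a band such as '30-39' (band width is an assumption)."""
    low = (age // width) * width
    return f"{low}-{low + width - 1}"

def generalize_zip(zip_code: str, keep: int = 3) -> str:
    """Keep only the leading digits of a ZIP code and mask the rest."""
    return zip_code[:keep] + "*" * (len(zip_code) - keep)

def generalize_date(d: date) -> str:
    """Coarsen a full date to year and month."""
    return f"{d.year:04d}-{d.month:02d}"

# Hypothetical record with three quasi-identifiers.
record = {"age": 37, "zip": "98052", "admit_date": date(2023, 4, 17)}
generalized = {
    "age": generalize_age(record["age"]),
    "zip": generalize_zip(record["zip"]),
    "admit_date": generalize_date(record["admit_date"]),
}
print(generalized)  # {'age': '30-39', 'zip': '980**', 'admit_date': '2023-04'}
```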

To drive this process, Truveta uses a well-known de-identification technique called k-anonymity. We modify or remove weak identifiers and quasi-identifiers in a data set to create groups (called equivalence classes) in which at least k records look the same. The higher the value of k, the lower the risk of matching a patient to a record. A larger pool of records makes it easier to form equivalence classes of size k without heavy modification or suppression. For this reason, we look across all health systems when building equivalence classes. This provides the maximum privacy benefit while minimizing suppression effects on research.
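The k-anonymity property itself is straightforward to check. The sketch below assumes that records are Python dictionaries and uses hypothetical quasi-identifier names. It groups records into equivalence classes by their quasi-identifier values and tests whether every class has at least k members. The example also shows why pooling across health systems helps. Neither hypothetical system’s records are 3-anonymous on their own, but the combined set is.

```python
from collections import Counter

# Hypothetical quasi-identifiers, assumed to be generalized already.
QUASI_IDENTIFIERS = ("age_band", "zip3", "sex")

def equivalence_classes(records, qis=QUASI_IDENTIFIERS):
    """Count the records that share each combination of quasi-identifier values."""
    return Counter(tuple(r[q] for q in qis) for r in records)

def is_k_anonymous(records, k, qis=QUASI_IDENTIFIERS):
    """Return True if every equivalence class contains at least k records."""
    return all(n >= k for n in equivalence_classes(records, qis).values())

row = {"age_band": "30-39", "zip3": "980", "sex": "F"}
system_a = [dict(row), dict(row)]  # two matching patients at one health system
system_b = [dict(row)]             # one matching patient at another

print(is_k_anonymous(system_a, k=3))             # False: class of size 2
print(is_k_anonymous(system_b, k=3))             # False: class of size 1
print(is_k_anonymous(system_a + system_b, k=3))  # True: pooled class of size 3
```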

De-identification may still suppress entire patient records, or specific fields that are of interest to a researcher. To minimize these effects, researchers can configure the de-identification process for their study. They choose which weak or quasi-identifiers to prioritize and how much fidelity to trade off, so that the result still meets their study goals.
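The interplay between generalization, suppression, and researcher priorities can be sketched as a simple greedy loop. This is an illustrative heuristic under stated assumptions, not Truveta’s algorithm. The generalization hierarchies in `HIERARCHIES`, the `deidentify` function, and the `max_suppressed` budget are all hypothetical. The researcher’s `priority` list orders quasi-identifiers from most to least important to the study. The loop coarsens the least important fields first. It then suppresses any records still in classes smaller than k, either once that number fits the suppression budget or once nothing more can be coarsened.

```python
from collections import Counter

# Hypothetical generalization hierarchies: each level coarsens the value further,
# and the last level (None) removes the field entirely.
HIERARCHIES = {
    "age": [lambda a: a, lambda a: (a // 10) * 10, lambda a: None],
    "zip": [lambda z: z, lambda z: z[:3], lambda z: None],
}

def apply_levels(records, levels):
    """Generalize each quasi-identifier in every record to its current level."""
    return [
        {**r, **{q: HIERARCHIES[q][lvl](r[q]) for q, lvl in levels.items()}}
        for r in records
    ]

def deidentify(records, k, priority, max_suppressed):
    """Coarsen low-priority fields first, then suppress records in small classes.

    priority lists quasi-identifiers from most to least important to the study.
    Returns the surviving generalized records and the level chosen for each field.
    """
    levels = {q: 0 for q in priority}
    while True:
        out = apply_levels(records, levels)
        keys = [tuple(r[q] for q in priority) for r in out]
        counts = Counter(keys)
        small = {i for i, key in enumerate(keys) if counts[key] < k}
        # Fields that can still be coarsened, least important first.
        coarsenable = [q for q in reversed(priority)
                       if levels[q] + 1 < len(HIERARCHIES[q])]
        if len(small) <= max_suppressed or not coarsenable:
            return [r for i, r in enumerate(out) if i not in small], levels
        levels[coarsenable[0]] += 1

records = [
    {"age": 34, "zip": "98052"},
    {"age": 36, "zip": "98053"},
    {"age": 38, "zip": "98101"},
    {"age": 52, "zip": "98052"},
]
# One hypothetical study cares most about age; another cares most about location.
by_age, levels_a = deidentify(records, k=2, priority=["age", "zip"], max_suppressed=1)
by_zip, levels_b = deidentify(records, k=2, priority=["zip", "age"], max_suppressed=1)
```

With these sample records, prioritizing `age` keeps age at decade precision and removes ZIP entirely. Prioritizing `zip` instead keeps three-digit ZIP codes and removes age. In both cases one record is suppressed. A production system would likely search the space of generalizations more carefully than this greedy loop does.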

Watermarking and fingerprinting

To learn more about Truveta’s commitment to privacy, you can read our whitepaper on our approach to protecting patient privacy.

Security

Development & operations (DevOps) – general security principles

We operate in accordance with a set of security principles that apply broadly to software engineering and infrastructure DevOps:

- Development in secure environments

- The codebase and pull requests (PRs) are managed through Azure DevOps (ADO)

- Change management with approvals required at all stages

- Deployments progress through development (DEV), integration (INT), and production (PROD) rings

- Automated validation suites, scaled to the rated risk and impact of proposed changes
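The ring-based promotion described in this list can be sketched as a small gatekeeping function. Everything here is hypothetical. The `Change` record, the mapping from risk rating to required validation suites in `REQUIRED_SUITES`, and the rule that each promotion needs an explicit approval for its target ring are assumptions for illustration. They do not describe Truveta’s Azure DevOps pipelines.

```python
from dataclasses import dataclass, field

RINGS = ["DEV", "INT", "PROD"]

# Hypothetical mapping from a change's rated risk to the validation suites it must pass.
REQUIRED_SUITES = {
    "low": {"unit"},
    "medium": {"unit", "integration"},
    "high": {"unit", "integration", "regression", "security"},
}

@dataclass
class Change:
    change_id: str
    risk: str                                        # "low", "medium", or "high"
    ring: str = "DEV"                                # every change starts in DEV
    approvals: set = field(default_factory=set)      # target rings already approved
    passed_suites: set = field(default_factory=set)  # validation suites that passed

def promote(change: Change) -> str:
    """Move a change to the next ring only if it is approved and validated."""
    idx = RINGS.index(change.ring)
    if idx == len(RINGS) - 1:
        raise ValueError(f"{change.change_id} is already in PROD")
    target = RINGS[idx + 1]
    if target not in change.approvals:
        raise PermissionError(f"promotion to {target} lacks approval")
    missing = REQUIRED_SUITES[change.risk] - change.passed_suites
    if missing:
        raise RuntimeError(f"missing validation suites: {sorted(missing)}")
    change.ring = target
    return target

change = Change("example-change", risk="medium",
                approvals={"INT"}, passed_suites={"unit", "integration"})
promote(change)  # DEV -> INT
```

A further call to `promote(change)` would raise `PermissionError`, because no approval has been recorded for PROD. Making every gate an explicit check keeps the approval and validation evidence auditable.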

Some of these principles are specialized for AI development and operations, as discussed below.

Secure AI model development

Data

Libraries, frameworks, tools, and open–source models

Secure development zone

Secure model hosting (ML Operations – MLOps)

To learn more about our commitment to data security, read our whitepaper on this topic.

Regulatory-grade quality

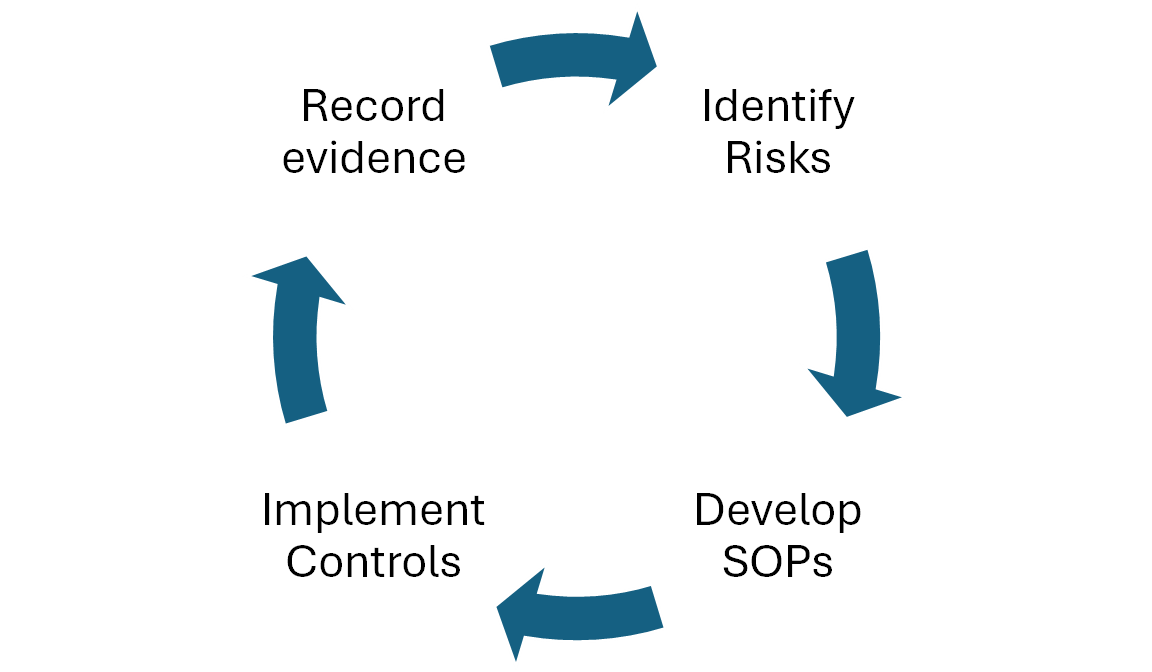

As with the other compliance needs, this applies to all engineering components, from the point of data ingestion to the research platform in Truveta Studio. We designed hundreds of product and process improvements to exceed FDA regulatory expectations, implemented adequate process and procedure controls aligned with FDA guidance, and created standard operating procedures (SOPs). Continuous monitoring and evidence logging ensure that the system complies with these standards and that our customers can prove the integrity of the data and documentation included in their regulatory submissions. For AI specifically, we have SOPs for model development and hosting, controls in the form of DQRs and certifications, and evidence recorded in the Quality Management System (QMS). The models that were in production when a customer took a data snapshot can be unambiguously identified. Their quality reports and certifications can then be provided as evidence of regulatory-grade operation.
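Identifying which models were in production when a snapshot was taken amounts to an interval lookup over a deployment log. The sketch below assumes a hypothetical log. Each model version records when it was deployed, when (if ever) it was retired, and a pointer to its quality report. The model names and report identifiers are invented for illustration.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional

@dataclass(frozen=True)
class Deployment:
    model: str
    version: str
    deployed_at: datetime
    retired_at: Optional[datetime]  # None while the version is still serving
    quality_report: str             # hypothetical ID of the quality report in the QMS

def models_at(deployments: List[Deployment], snapshot_time: datetime) -> List[Deployment]:
    """Return deployments serving at snapshot_time (deployed inclusive, retired exclusive)."""
    return [
        d for d in deployments
        if d.deployed_at <= snapshot_time
        and (d.retired_at is None or snapshot_time < d.retired_at)
    ]

log = [
    Deployment("lab-mapper", "1.4", datetime(2024, 1, 10), datetime(2024, 3, 1), "QR-lab-1.4"),
    Deployment("lab-mapper", "1.5", datetime(2024, 3, 1), None, "QR-lab-1.5"),
    Deployment("med-mapper", "2.0", datetime(2023, 11, 5), None, "QR-med-2.0"),
]

for d in models_at(log, datetime(2024, 2, 15)):
    print(d.model, d.version, d.quality_report)
```

A snapshot taken on 15 February 2024 resolves to `lab-mapper` 1.4 and `med-mapper` 2.0. Each interval is treated as half-open, inclusive at deployment and exclusive at retirement. As a result, at most one version of a given model matches any instant, provided that model’s deployment intervals do not overlap.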

Truveta Data has been endorsed as research-ready for regulatory submissions, reflecting key areas of investment such as a state-of-the-art data quality management system (QMS), third-party system audits by regulatory experts, and industry-leading security and privacy certifications.

Ethical AI

- Proportionality and do-no-harm: For our use cases, this means that extracting and mapping clinical terms should improve the usability of our data for research without adding false knowledge. We ensure this through rigorous quality and fit-for-purpose evaluations.

- Safety and security: Secure AI development and hosting, as described above.

- Fairness: The aim is to ensure that the system does not introduce or reinforce systemic bias. To achieve this, we purposefully avoid using patients’ demographic features in the data harmonization models, and we use only redacted and de-identified data for model development.

- Privacy: We are compliant with HIPAA requirements as described above.

- Accountability, transparency and explainability: As discussed in my previous blog post “Delivering accuracy and explainability”, we follow a rigorous system of quality assurance and monitoring for our models. When a model has low confidence, it abstains rather than risk providing a wrong answer.

- Sustainability: As discussed in my previous blog post “Scaling AI models for growth”, we rely heavily on an agentic framework built from relatively small models, which are more sustainable to train and host.

- Human oversight: In Truveta’s data pipeline, AI models often assist human operators (for example, by recommending mappings), and we always rely on human experts for ground truth and continuous error monitoring. Furthermore, human operators can always override AI actions. Hence there is continuous human oversight of the AI-driven data harmonization system.
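The abstention and override behavior described in this list can be illustrated with a short sketch. The confidence `threshold` of 0.9, the `Suggestion` record, and the `suggest_mapping` and `human_review` functions are hypothetical. The sketch shows only two things: a low-confidence prediction is returned as no answer, and a human decision always takes precedence over the model’s.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Suggestion:
    source_term: str
    target_code: Optional[str]  # None means the model abstained
    confidence: float
    decided_by: str = "model"

def suggest_mapping(source_term: str, candidate: str, confidence: float,
                    threshold: float = 0.9) -> Suggestion:
    """Return the model's mapping only if confidence meets the (assumed) threshold."""
    if confidence < threshold:
        return Suggestion(source_term, None, confidence)  # abstain rather than guess
    return Suggestion(source_term, candidate, confidence)

def human_review(s: Suggestion, override_code: Optional[str] = None) -> Suggestion:
    """A human operator may accept the suggestion as-is or override it."""
    if override_code is not None:
        return Suggestion(s.source_term, override_code, s.confidence, decided_by="human")
    return s

accepted = suggest_mapping("term-x", "code-A", confidence=0.95)
abstained = suggest_mapping("term-y", "code-B", confidence=0.55)
corrected = human_review(abstained, override_code="code-C")
```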